LLM Powered Autonomous Agents

tags

Paper

date

Feb 1, 2024

slug

paper-LLM-Powered-Autonomous-Agents

status

Published

summary

Using an LLM as the agent's brain

type

Post

cover

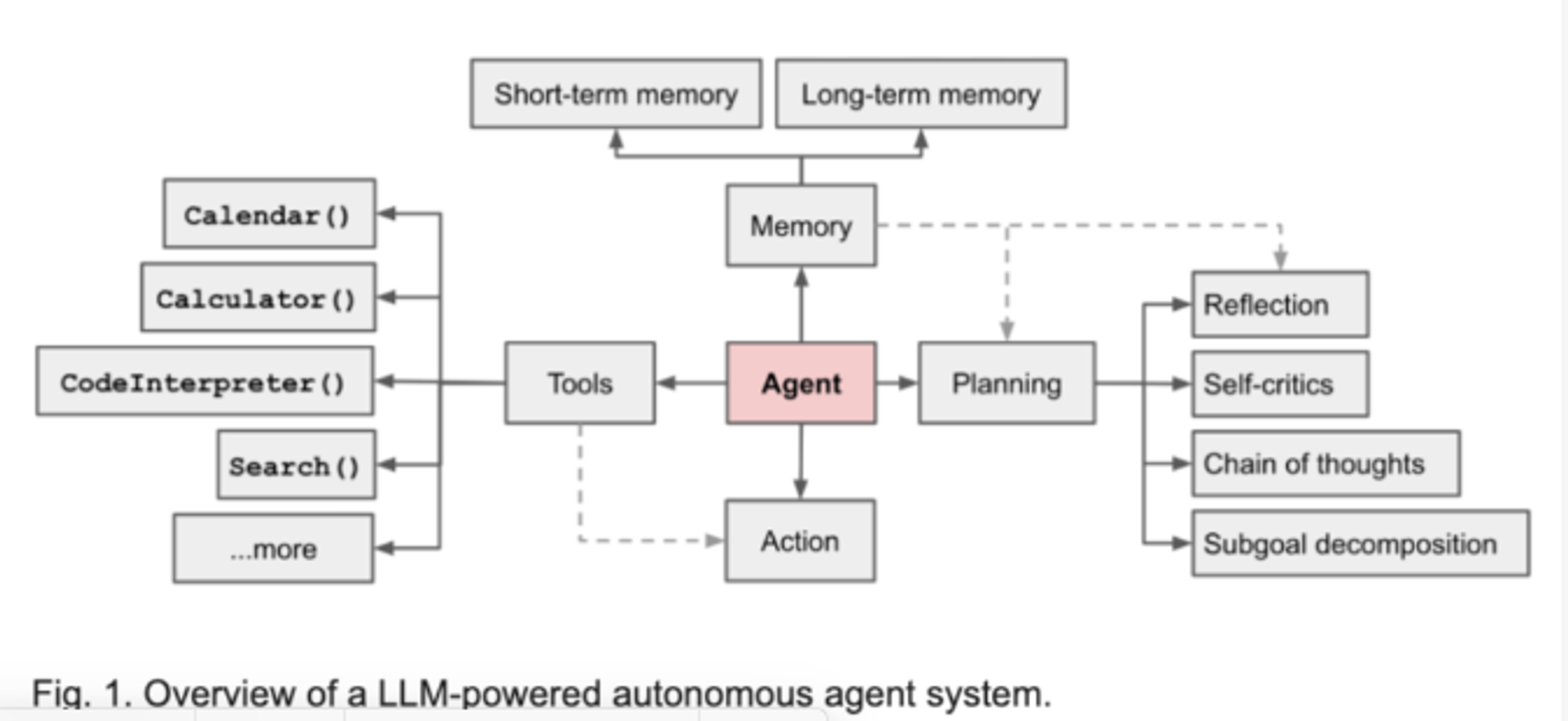

1. Agent System Overview

The LLM functions as the agent's brain, complemented by several key components:

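- Planning: task decomposition and self-learning

- Memory: short-term (in-context) and long-term (information retrieval)

- Tools: APIs for additional information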

2. Planning

2.1 Task Decomposition

- Chain of Thought (CoT):

The model is instructed to “think step by step” to utilize more test-time computation to decompose hard tasks into smaller and simpler steps.

- Tree of Thoughts (ToT):

- Self-reflection: improving capability across iterations by reviewing past attempts.

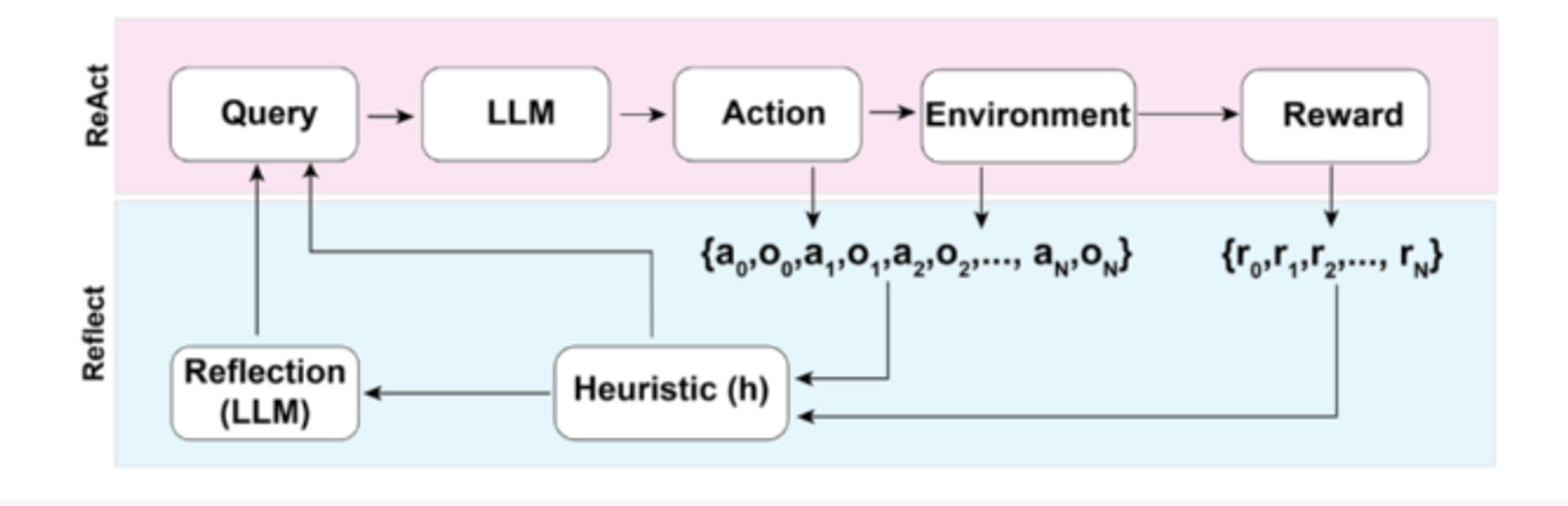

- ReAct

- Reflexion

- Chain of Hindsight

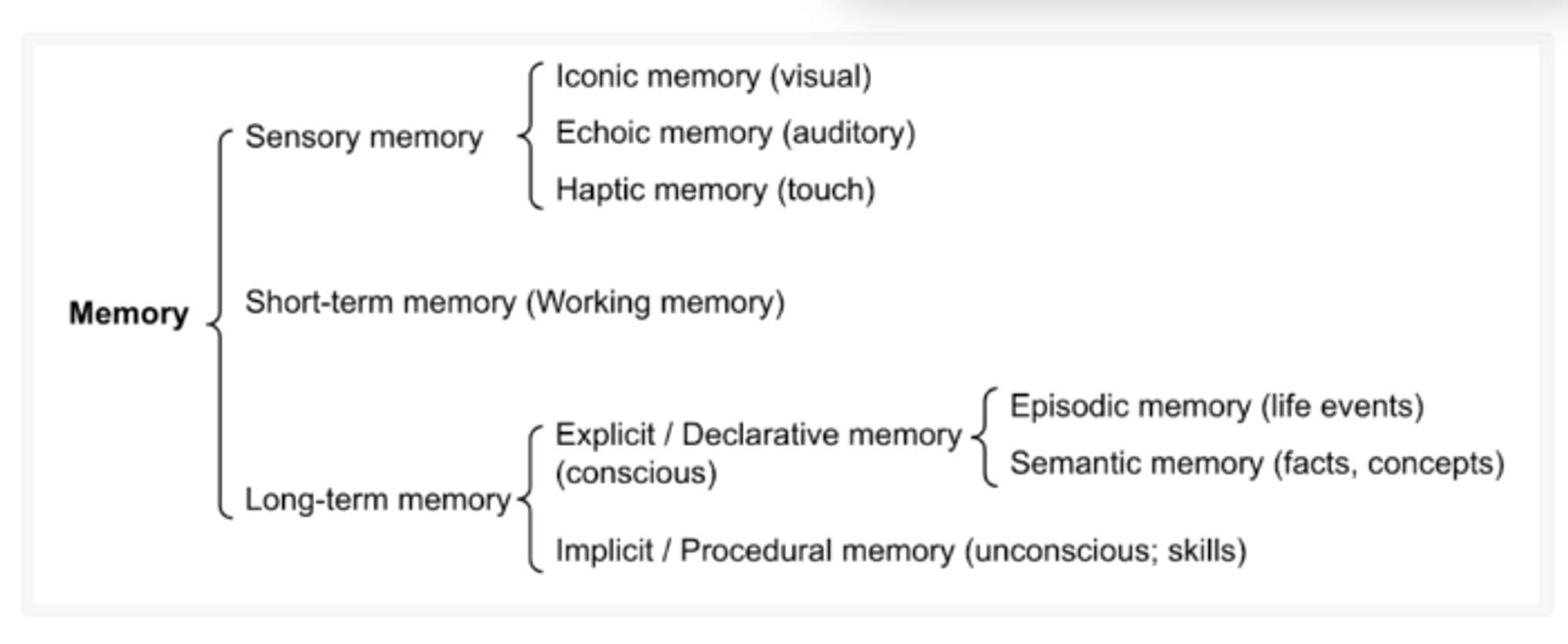

- Sensory Memory (earliest stage; visual/auditory):

- Short-Term Memory (STM):

- Long-Term Memory (LTM):

- Explicit/Declarative memory: life events, facts and concepts

- Implicit/Procedural memory: unconscious and involves skills and routines that are performed automatically

- Some algorithms (I am skipping this part for now; it is outside my field):

- LSH

- ANNOY

- HNSW

- FAISS

- ScaNN

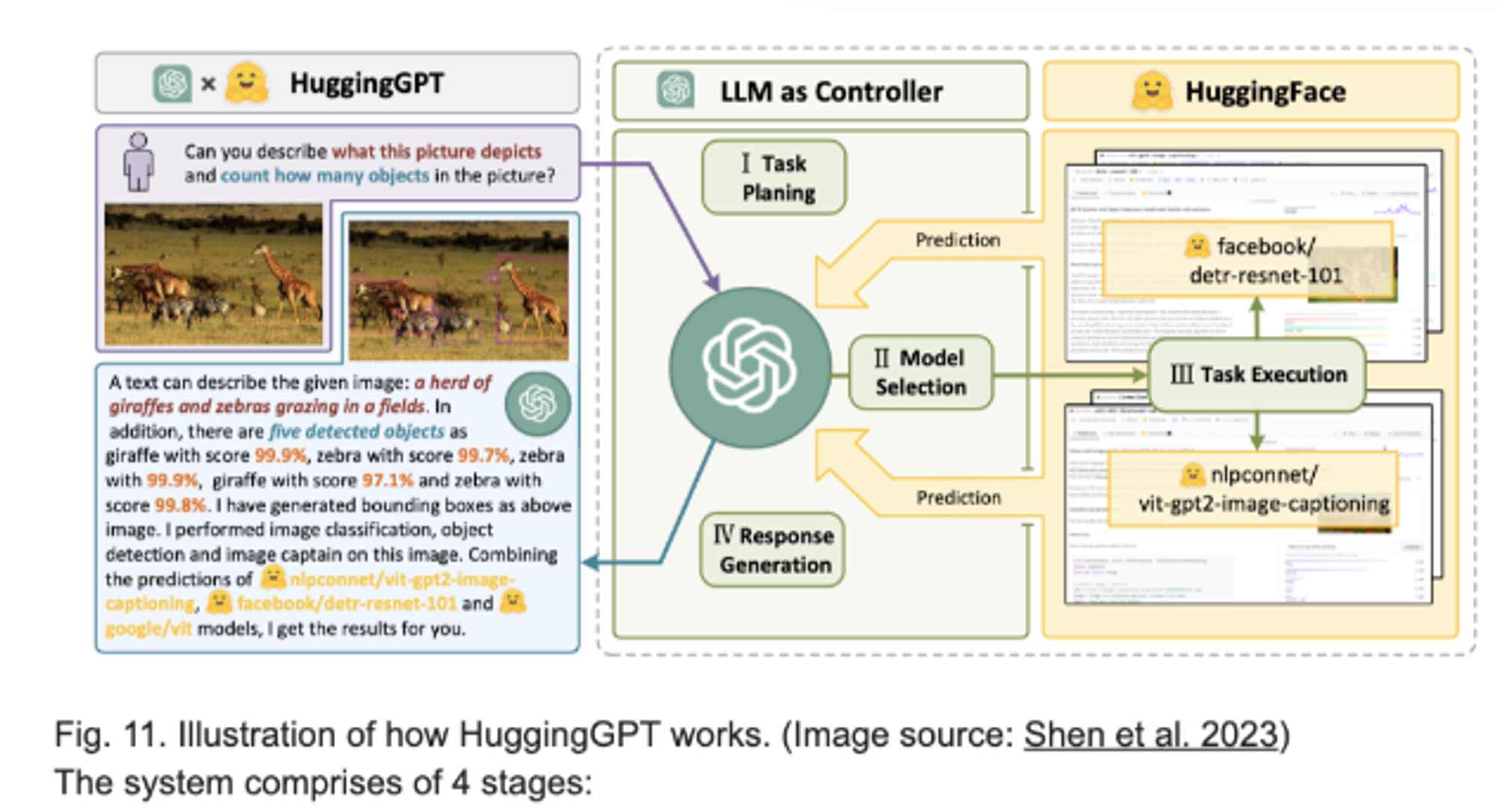

- HuggingGPT

Tree of Thoughts (ToT) extends CoT by exploring multiple reasoning possibilities at each step.
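The difference between following a single chain and exploring several reasoning branches per step can be sketched as a small breadth-first search. This is only an illustration under stated assumptions, not the procedure from the Tree of Thoughts paper: `propose` and `score` are hypothetical stand-ins for LLM calls (replaced here by toy deterministic functions on a number puzzle), and `depth` and `beam_width` are assumed parameters.

```python
from typing import Callable, List, Tuple

Thought = Tuple[int, ...]  # a partial solution: the sequence of steps chosen so far


def tree_of_thoughts_bfs(
    propose: Callable[[Thought], List[Thought]],
    score: Callable[[Thought], float],
    depth: int,
    beam_width: int,
) -> Thought:
    """Breadth-first search over partial solutions, keeping the best few per level."""
    frontier: List[Thought] = [()]  # start from the empty thought
    for _ in range(depth):
        # Expand every kept thought into several candidate next thoughts.
        candidates = [child for t in frontier for child in propose(t)]
        if not candidates:
            break
        # Keep only the highest-scoring candidates (the evaluation step).
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]
    return max(frontier, key=score)


# Toy stand-ins for the LLM (assumptions for illustration only):
# pick three digits from 1..3 whose sum is as close to 7 as possible.
def toy_propose(thought: Thought) -> List[Thought]:
    return [thought + (d,) for d in (1, 2, 3)]


def toy_score(thought: Thought) -> float:
    return -abs(7 - sum(thought))


best = tree_of_thoughts_bfs(toy_propose, toy_score, depth=3, beam_width=2)
print(best, sum(best))
```

With `beam_width=1` the search collapses to a single greedy chain, which is roughly the CoT case; a wider beam keeps alternative branches alive at each step.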

2.2 Self-Reflection

Self-reflection is a vital aspect that allows autonomous agents to improve iteratively by refining past action decisions and correcting previous mistakes.

ReAct integrates reasoning and acting within the LLM by extending the action space to be a combination of task-specific discrete actions and the language space. In short, it merges the task action space with the language space.
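The interleaving of reasoning and acting can be sketched as a loop that alternates model output with tool observations. This is a minimal sketch under assumptions: `scripted_llm` is a hypothetical stand-in for a real model, the `lookup` tool and the `Action: name[input]` text format are illustrative choices loosely modeled on ReAct-style prompts, and `finish` is an assumed name for the terminating action.

```python
import re
from typing import Callable, Dict

# Hypothetical tool set: the task-specific discrete actions.
TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup": lambda key: {"capital of France": "Paris"}.get(key, "unknown"),
}

ACTION_RE = re.compile(r"Action:\s*(\w+)\[(.*)\]")


def react_loop(llm: Callable[[str], str], question: str, max_steps: int = 5) -> str:
    """Alternate between model output (thought + action) and tool observations."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = llm(transcript)          # free-form thought plus one action line
        transcript += step + "\n"
        match = ACTION_RE.search(step)
        if match is None:
            break                       # the model produced no parsable action
        name, arg = match.group(1), match.group(2)
        if name == "finish":            # action that ends the episode with an answer
            return arg
        tool = TOOLS.get(name)
        observation = tool(arg) if tool else f"unknown tool {name}"
        transcript += f"Observation: {observation}\n"
    return "no answer"


def scripted_llm(transcript: str) -> str:
    """Stand-in for a real model: replies based on what is already in the transcript."""
    if "Observation: Paris" in transcript:
        return "Thought: I have the answer.\nAction: finish[Paris]"
    return "Thought: I should look this up.\nAction: lookup[capital of France]"


answer = react_loop(scripted_llm, "What is the capital of France?")
print(answer)
```

The thoughts live in the language space and the `lookup` call is a discrete task action; both are appended to the same transcript, which is what lets later steps condition on earlier reasoning and observations.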

Reflexion is a framework that equips agents with dynamic memory and self-reflection capabilities to improve reasoning skills. Reflexion has a standard RL setup, in which the reward model provides a simple binary reward and the action space follows the setup in ReAct, where the task-specific action space is augmented with language to enable complex reasoning steps. In short: an RL mechanism plus an LLM gives interpretable self-reflection.
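The trial, binary reward, and reflection cycle can be sketched as a retry loop that carries verbal notes forward. This is a simplified illustration, not the Reflexion implementation: `act`, `evaluate`, and `reflect` are hypothetical callables standing in for the actor model, the reward signal, and the self-reflection model, and the toy versions below are deterministic so the loop can run without an LLM.

```python
from typing import Callable, List, Tuple


def reflexion_loop(
    act: Callable[[str, List[str]], str],
    evaluate: Callable[[str], bool],
    reflect: Callable[[str, str], str],
    task: str,
    max_trials: int = 3,
) -> Tuple[str, List[str]]:
    """Retry a task, carrying verbal reflections from failed trials forward."""
    memory: List[str] = []              # dynamic memory of past reflections
    attempt = ""
    for _ in range(max_trials):
        attempt = act(task, memory)     # the actor conditions on earlier reflections
        if evaluate(attempt):           # simple binary reward: success or failure
            break
        memory.append(reflect(task, attempt))
    return attempt, memory


# Toy stand-ins for the model calls (assumptions for illustration only).
def toy_act(task: str, memory: List[str]) -> str:
    return "4" if any("add" in note for note in memory) else "22"


def toy_evaluate(attempt: str) -> bool:
    return attempt == "4"


def toy_reflect(task: str, attempt: str) -> str:
    return f"Answer {attempt} was wrong for '{task}': add the numbers, do not concatenate."


final, notes = reflexion_loop(toy_act, toy_evaluate, toy_reflect, "2 + 2")
print(final, len(notes))
```

Because the reflections are plain text, the memory stays readable by a human, which is the interpretability point made above.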

Chain of Hindsight (CoH) encourages the model to improve on its own outputs by explicitly presenting it with a sequence of past outputs, each annotated with feedback. In short, a post-hoc review.

3. Memory

3.1 Types of Memory

Sensory memory is the earliest stage of memory, providing the ability to retain impressions of sensory information (visual, auditory, etc.) after the original stimuli have ended.

Short-term memory (STM) stores information that we are currently aware of and need in order to carry out complex cognitive tasks such as learning and reasoning.

3.2 Maximum Inner Product Search (MIPS)

A standard practice is to save the embedding representation of information into a vector store database that can support fast maximum inner-product search (MIPS).
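What a MIPS lookup computes can be shown with an exact brute-force scan. This is a sketch for intuition only: the three-dimensional vectors and the stored texts are made up, and a real vector store would obtain embeddings from a model and would typically use an approximate index (such as LSH, ANNOY, HNSW, FAISS, or ScaNN) rather than scanning every entry.

```python
from typing import List, Sequence, Tuple


def inner_product(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def mips_top_k(
    query: Sequence[float],
    store: List[Tuple[str, Sequence[float]]],
    k: int = 1,
) -> List[Tuple[str, float]]:
    """Exact maximum inner-product search by scanning every stored vector."""
    scored = [(text, inner_product(query, vec)) for text, vec in store]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Hypothetical embeddings (assumption: a real system gets these from an embedding model).
memory_store = [
    ("user likes hiking", (0.9, 0.1, 0.0)),
    ("user is vegetarian", (0.0, 0.8, 0.2)),
    ("user lives in Oslo", (0.1, 0.0, 0.9)),
]

top = mips_top_k((0.0, 1.0, 0.1), memory_store, k=2)
print(top)
```

The scan costs one inner product per stored item, which is why approximate indexes that trade a little accuracy for a large speedup are used once the store grows.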

4. Tool Use

Equipping LLMs with external tools can significantly extend the model capabilities.

LLM as controller: in HuggingGPT, the LLM acts as the controller that decides which tools (models) to call.
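The controller pattern can be sketched as a model that reads tool descriptions, replies with a structured plan, and leaves execution to ordinary code. This is a minimal sketch, not HuggingGPT's actual pipeline: the `TOOLS` registry, the JSON plan format with `tool` and `args` keys, and `scripted_controller` (a stand-in for a real model) are all assumptions made for illustration.

```python
import json
from typing import Callable, Dict

# Hypothetical tool registry: name -> (description shown to the model, implementation).
TOOLS: Dict[str, tuple] = {
    "calculator": ("Add two integers.", lambda args: str(args["a"] + args["b"])),
    "echo": ("Repeat the given text.", lambda args: args["text"]),
}


def build_prompt(request: str) -> str:
    """List the available tools so the controller model can choose among them."""
    lines = [f"- {name}: {desc}" for name, (desc, _) in TOOLS.items()]
    return "Tools:\n" + "\n".join(lines) + f"\nRequest: {request}\nReply with JSON."


def run_with_tools(controller: Callable[[str], str], request: str) -> str:
    """Ask the controller which tool to call, then execute that call."""
    plan = json.loads(controller(build_prompt(request)))
    if plan.get("tool") not in TOOLS:
        return "no suitable tool"
    _, implementation = TOOLS[plan["tool"]]
    return implementation(plan.get("args", {}))


def scripted_controller(prompt: str) -> str:
    """Stand-in for a real model: always plans a calculator call."""
    return json.dumps({"tool": "calculator", "args": {"a": 2, "b": 3}})


result = run_with_tools(scripted_controller, "What is 2 + 3?")
print(result)
```

Keeping the plan as structured data separates the model's decision from its execution, so a bad plan can be rejected before any tool runs.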

5. Cases
